Random Forests — An Intuitive Understanding

What is Random Forest?

Random Forest is a versatile machine-learning algorithm used for making predictions. It works like a team of decision-makers, each contributing an opinion to a collective decision.

Random Forest is highly effective for prediction tasks because it is robust and handles large datasets well. Combining the predictions of many trees reduces the risk of overfitting compared with a single tree and usually produces more accurate results.

Random Forests are essentially an ensemble of Decision Trees.

Decision trees are:

- easy to create,

- easy to implement, and

- easy to interpret.

Example

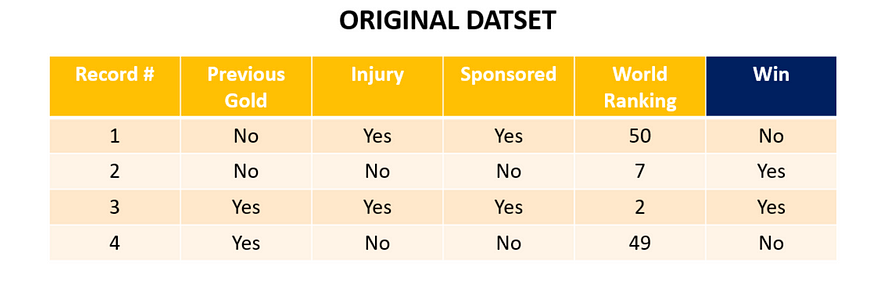

Let’s say we have the Olympics 2020 coming up. Our Random Forest has to predict whether a player will win a medal this year. It takes the following 4 variables into account:

- Has the player won a Gold Medal previously?

- Has the player had any recent injury?

- Is there sponsorship available for the player?

- What is the player’s current world rank?

Also, for the sake of the example, suppose our dataset has only 4 players. Each row is a record (data point). In this dataset we already know the outcomes: the players ranked 49 and 50 do not win a medal, and only the players ranked 2 and 7 do.

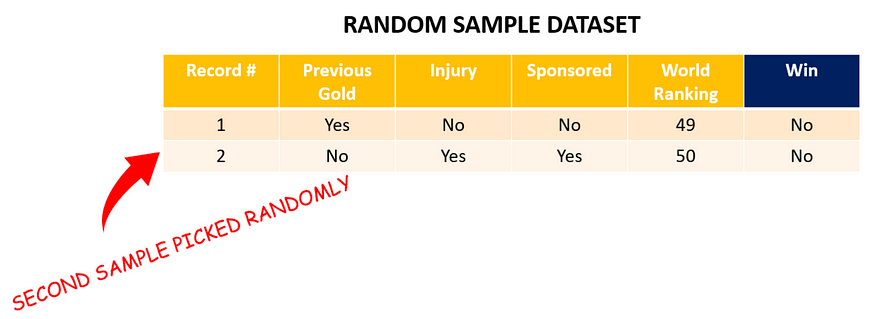

Now let’s create the bootstrapped dataset, which is step 1 of building a Random Forest.

We begin by choosing one row at random from the table above. Say we pick row 4; it becomes row 1 of the bootstrapped table.

Next, we pick row 1 from the original table. Rows are drawn with replacement, so the same row can be picked more than once. Note that the bootstrapped dataset has the same number of rows as the original dataset.

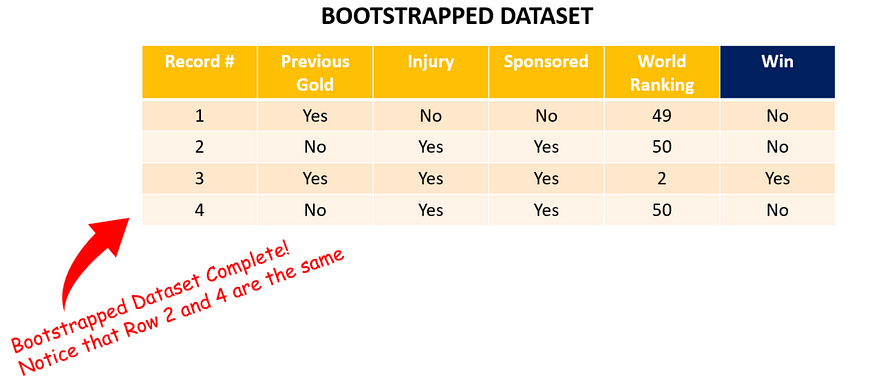

Two more draws later, we have our bootstrapped dataset. Note that row 1 was picked twice (it appears as rows 2 and 4 in the bootstrapped table) and row 2 was never picked.
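The sampling step above can be sketched in a few lines of Python. This is only an illustration: it works on row numbers rather than the full table, and the function name `bootstrap_sample` and the fixed seed are assumptions made for the example, not something from the original walkthrough.

```python
import random

def bootstrap_sample(rows, rng):
    """Draw len(rows) rows uniformly at random, WITH replacement."""
    return [rng.choice(rows) for _ in rows]

# Row numbers of the original 4-player table (1-based, as in the text).
original_rows = [1, 2, 3, 4]

# The seed is an arbitrary choice so that reruns give the same draw.
rng = random.Random(0)
sample = bootstrap_sample(original_rows, rng)

# Rows that were never drawn (like row 2 in the walkthrough above).
never_picked = sorted(set(original_rows) - set(sample))

print("bootstrapped rows:", sample)
print("rows never picked:", never_picked)
```

Because every draw is made from the full table, duplicates and left-out rows are expected rather than a mistake.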

Step 2: Create a Decision Tree. Once we have a bootstrapped dataset, we create a decision tree from it. Remember that the most important part of building a decision tree is deciding which question to ask at each node.

- Select a random subset (say, 2) of the 4 variables (Previous Gold, Injury, Sponsorship Status, and World Ranking) as candidates for the root node.

- Compute the Gini impurity for each candidate and pick the better of the 2, i.e. the candidate that best separates the data.

- For the next node, again select a random subset of the remaining 3 variables, and repeat the process until no variables are left. In jargon terms, choosing a random subset of variables at each node is often called feature bagging.
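To make the root-node choice concrete, here is a minimal sketch of the Gini computation. The table itself is not reproduced in the text, so all yes/no values below are hypothetical; only the medal outcomes (ranks 2 and 7 win, ranks 49 and 50 do not) follow the example. World Ranking is simplified to an assumed yes/no variable `top_10`, and the helper names are made up for illustration.

```python
import random

def gini(labels):
    """Gini impurity of a list of class labels: 1 - sum(p_k ** 2)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def split_impurity(rows, feature, target="medal"):
    """Weighted Gini impurity after splitting rows on a yes/no feature."""
    yes = [r[target] for r in rows if r[feature]]
    no = [r[target] for r in rows if not r[feature]]
    return (len(yes) * gini(yes) + len(no) * gini(no)) / len(rows)

def best_of_random_subset(rows, features, k, rng):
    """Sample k candidate features; return the lowest-impurity one."""
    candidates = rng.sample(features, k)
    best = min(candidates, key=lambda f: split_impurity(rows, f))
    return best, candidates

# HYPOTHETICAL feature values (ranks 2, 7, 49, 50 from top to bottom).
players = [
    {"prev_gold": True,  "injury": False, "sponsor": True,  "top_10": True,  "medal": True},
    {"prev_gold": False, "injury": True,  "sponsor": True,  "top_10": True,  "medal": True},
    {"prev_gold": False, "injury": True,  "sponsor": True,  "top_10": False, "medal": False},
    {"prev_gold": False, "injury": False, "sponsor": False, "top_10": False, "medal": False},
]
features = ["prev_gold", "injury", "sponsor", "top_10"]

for f in features:
    print(f, round(split_impurity(players, f), 3))

best, candidates = best_of_random_subset(players, features, k=2, rng=random.Random(0))
print("candidates:", candidates, "-> chosen root:", best)
```

A lower weighted impurity means the question separates medal winners from non-winners more cleanly. Note that the tree only gets to compare the 2 sampled candidates, so the overall best variable is not always chosen.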

Step 3: Rinse and Repeat. Once you have one decision tree, start the whole process over again. By creating a new bootstrapped dataset and considering only a random subset of variables at each step, you end up with a wide variety of decision trees. Keep repeating steps 1 and 2. Ideally you would build hundreds of trees, but for illustration we show just 6 trees below. Note that each tree comes from a different bootstrapped dataset.

- This randomness in the tree-building process is what gives Random Forest its flexibility and high accuracy.
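The repeat loop can be sketched as follows. To keep it short, each "tree" here is reduced to its root question only, and the player values are again hypothetical (only the medal outcomes follow the example). The point is simply to show how bootstrapping plus random candidate subsets produce different trees; a real implementation would keep splitting below the root.

```python
import random
from collections import Counter

FEATURES = ["prev_gold", "injury", "sponsor", "top_10"]

# HYPOTHETICAL yes/no values; "top_10" is an assumed stand-in for world rank.
PLAYERS = [
    {"prev_gold": True,  "injury": False, "sponsor": True,  "top_10": True,  "medal": True},
    {"prev_gold": False, "injury": True,  "sponsor": True,  "top_10": True,  "medal": True},
    {"prev_gold": False, "injury": True,  "sponsor": True,  "top_10": False, "medal": False},
    {"prev_gold": False, "injury": False, "sponsor": False, "top_10": False, "medal": False},
]

def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def weighted_gini(rows, feature):
    """Weighted impurity of the two groups produced by a yes/no feature."""
    yes = [r["medal"] for r in rows if r[feature]]
    no = [r["medal"] for r in rows if not r[feature]]
    return (len(yes) * gini(yes) + len(no) * gini(no)) / len(rows)

def grow_root(rows, rng, k=2):
    """Step 1 (bootstrap) followed by step 2 (best of k random features)."""
    sample = [rng.choice(rows) for _ in rows]
    candidates = rng.sample(FEATURES, k)
    return min(candidates, key=lambda f: weighted_gini(sample, f))

rng = random.Random(42)  # arbitrary seed for repeatability
roots = [grow_root(PLAYERS, rng) for _ in range(100)]  # step 3: repeat

# How often each variable ended up as the root question.
print(Counter(roots))
```

Even on this tiny dataset, the 100 roots are not all the same variable, which is the variety the forest relies on.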

Why does it work?

Consider a company that is hiring for the position of CTO. A few people are assigned the role of interviewing the candidates, according to their strengths.

- CEO — Judges the leadership qualities

- Tech. Director — Judges the technological awareness

- HR Director — Judges the personality and cultural fit

- Board Members — Judge the managerial qualities

- A few star employees — Judge depth of knowledge in specific segments

Each of these interviewers has strengths acquired over many years of experience. But these strengths also become biases: each interviewer is inclined to choose a candidate who is strong in the interviewer’s own area of expertise.

If it were up to the star employees alone, the company would end up hiring a CTO who works well as an individual contributor but might not be a visionary leader (which was the CEO’s job to judge).

Random Forest works similarly. Each tree is an interviewer, and each tree is biased toward results that favor particular variables. Their combined results, however, come out to be quite accurate. The whole company is the Random Forest, and it produces more accurate results than an average employee, a.k.a. a single Decision Tree.
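One way to see why a panel can beat any single member is an idealized calculation. The sketch below assumes that every tree is right with the same probability `p` and that trees make their mistakes independently. That independence is a strong assumption made only for this illustration: real trees are trained on overlapping data and are correlated, so the actual gain is smaller. The function name is hypothetical.

```python
from math import comb

def majority_vote_accuracy(p, n_trees):
    """Probability that more than half of n_trees independent voters,
    each correct with probability p, give the right answer.
    Use an odd n_trees so that ties cannot occur."""
    need = n_trees // 2 + 1
    return sum(
        comb(n_trees, k) * p**k * (1 - p) ** (n_trees - k)
        for k in range(need, n_trees + 1)
    )

# Each individual tree is only right 60% of the time in this toy setup.
for n in (1, 3, 11, 101):
    print(n, round(majority_vote_accuracy(0.6, n), 3))
```

Under these assumptions the accuracy of the vote climbs well above that of any single tree as more trees are added, which is the intuition behind the interview panel.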

Let’s return to the example dataset used earlier. Suppose a new player registers for the race, with the following variables:

- Previous Gold Winner: No

- Any Recent Injury: No

- Sponsorship Available: No

- World Ranking: 7

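The question is: will the player win a medal in this race?

Let’s see how the Random Forest arrives at its prediction. The new player’s data is run through every tree in the forest, each tree casts its own vote, and the answer with the most votes becomes the forest’s prediction.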
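The voting step can be sketched like this. The six votes are hypothetical placeholders, one per tree, chosen only to show the mechanics; they are not results from the trees in this article.

```python
from collections import Counter

def majority_vote(votes):
    """Return the most common vote and its share of all votes."""
    winner, count = Counter(votes).most_common(1)[0]
    return winner, count / len(votes)

# HYPOTHETICAL votes from 6 trees for the new player ("Yes" = wins a medal).
tree_votes = ["Yes", "Yes", "No", "Yes", "No", "Yes"]

prediction, share = majority_vote(tree_votes)
print(f"Forest predicts: {prediction} ({share:.0%} of trees agree)")
```

With these made-up votes, 4 of the 6 trees say "Yes", so the forest would answer "Yes". A different set of votes would of course give a different answer.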

The process described above is called Bagging, short for bootstrap aggregating: we bootstrap the data and then use an aggregate of the individual results to obtain the final result.

Key Takeaways

- Random Forest is a versatile machine-learning algorithm used for making predictions.

- It combines multiple decision trees to produce accurate results.

- Each decision tree in the Random Forest is biased toward specific variables but contributes to the overall prediction.

- Random Forest reduces overfitting and handles large datasets effectively.

- The ensemble approach of Random Forest mirrors the diversity of perspectives in a team, leading to robust predictions.

- Bagging, which means bootstrapping the data and aggregating the individual results, is a key component of Random Forest.

ABOUT THE AUTHOR

Harshit Sanwal

Marketing Analyst, DataMantra